CALIFORNIA – With protests against the killing of George Floyd continuing to swirl throughout the country, facial recognition technology is coming under fire. Law enforcement agencies, including the Federal Bureau of Investigation and Los Angeles County Sheriff’s Department, use such systems to identify possible criminals by matching a person’s facial features from photos or videos to existing databases.

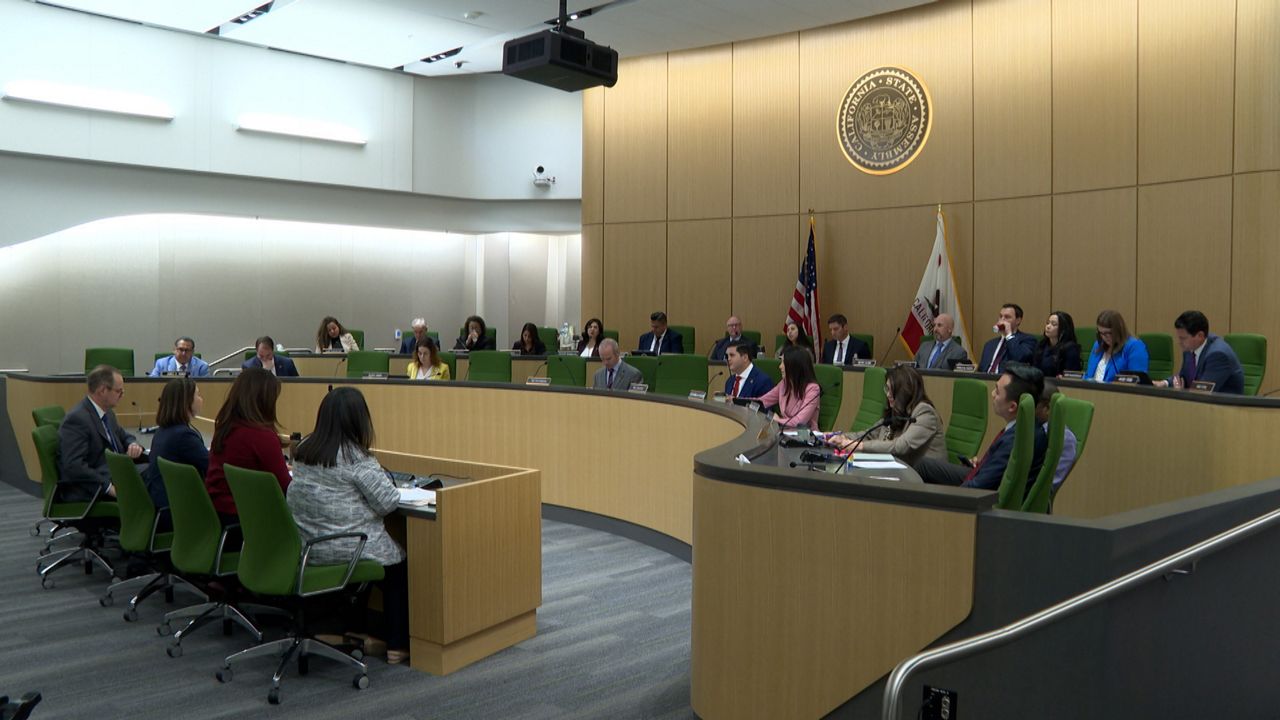

But AB2261, a proposed law designed to hold users of that technology accountable, failed to clear the California Assembly’s Appropriations Committee on Wednesday, when it was put on hold. The bill sought to regulate the technology’s use by commercial entities as well as state and local public entities, with mandates for individual consent, a “human review” process and biennial accountability reports to an as-yet-unnamed state agency.

“During times of crisis, history has demonstrated that civil liberties and privacy rights are often temporarily suspended for the alleged public interest, only for those intrusions to become permanent,” said the bill’s author, California Assemblyman Edwin Chau (D-San Gabriel Valley).

Critics, however, said the bill did nothing to deter racial profiling and lacked true oversight.

“Our concern is that facial recognition technology further adds to surveillance and intelligence gathering,” said Hamid Khan, a coordinator with StopLAPDSpying.org, an activist website that ran an aggressive online campaign to stop the bill. “Looking at who’s impacted the most as a result of these surveillance technologies, it becomes an additional tool for racial profiling.”

Khan cited the error rate of facial recognition technology. Its accuracy depends largely on the clarity of the photos and videos used, according to the National Institute of Standards and Technology, the federal laboratory that develops standards for new technology. That clarity is often compromised by real-world conditions, such as when a person is not looking directly at a camera or is obscured by surrounding objects.

The NIST found there is wide variability in the accuracy of such systems from company to company.

There is also implicit bias. In a study released last year, the NIST said it found empirical evidence that most facial-recognition algorithms exhibit demographic differentials, meaning their accuracy varies with a person’s race, gender or age. It found facial recognition systems misidentified African American and Asian people up to 100 times more often than white men.

“The hidden bias of these systems is that if you're basing it off humans, humans have a lot of bias,” said Vinhcent Le, a technology equity attorney at the Greenlining Institute, a multiethnic, multidisciplinary think tank in Oakland, Calif. “If you’re teaching a facial recognition system to recognize what good faces look like, that’s very subjective and there needs to be stronger oversight to make sure you’re taking the right steps and examining your data to make sure you’ve eliminated bias as best we can.”

The Greenlining Institute was one of roughly 50 groups that joined with the American Civil Liberties Union to sign an opposition letter to AB2261.

“We are grateful that the Assembly Appropriations Committee recognized the shortcomings of this bill. AB 2261 was opposed by a broad coalition of civil rights organizations, labor groups, public health experts and technology scholars,” said Matt Cagle, technology and civil liberties attorney with the ACLU of Northern California. “We are excited to continue working with these partners to create a California free from face surveillance.”

Whether it’s from individual cell phones, drones or security cameras, video footage is everywhere. And it’s increasingly being used by facial recognition technology companies and the businesses and other entities that work with them. A California Assembly Appropriations Committee analysis of AB2261 said the New York company Clearview AI had allowed law enforcement to access its database of almost 3 billion biometric images collected through facial recognition technology.

“Facial recognition technology is something we’ll be developing for the long term. We should be thinking about long-term solutions to shape how it’s being created and used and not just reacting,” said Charles Belle, executive director of the Startup Policy Lab in San Francisco and a non-residential fellow at Stanford University’s Center for Internet and Society.

AB2261 is being held in the Appropriations Committee suspense file, pending review of its financial implications. The last time California attempted comprehensive regulation of facial recognition technology was in 2001 with Senate Bill 169, which failed to pass.